Choosing the Right Mezzanine for COTS Systems

Open architecture embedded systems for military and aerospace applications have always relied on mezzanine cards, or daughter cards, to provide flexibility and modularity, because these cards handle the extreme breadth of I/O functions required. Thanks to the widespread adoption of industry standards defining these mezzanine products, carrier boards can accept mezzanine boards from a wide range of vendors that specialize in niche technologies and interfaces.

Today, three popular mezzanine standards dominate the embedded market: PMC (PCI Mezzanine Card), XMC (Switched Mezzanine Card), and FMC (FPGA Mezzanine Card). These mezzanines support all popular industry architectures including VME, VXS, OpenVPX, CompactPCI, and CompactPCI Serial for both 3U and 6U form factors and across a range of cooling techniques and ruggedization levels. Each of these three mezzanine standards presents a unique set of advantages and shortcomings that we will discuss in this article.

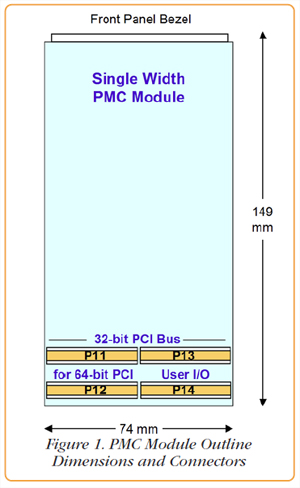

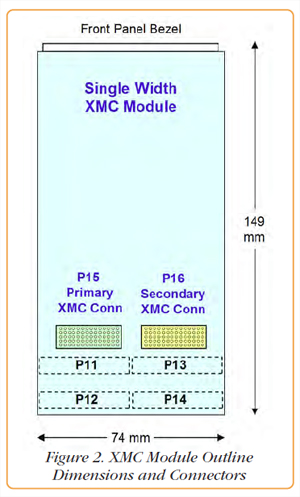

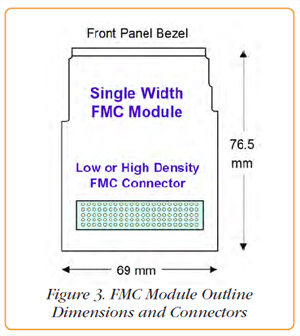

PMC Module Standards

Defined under the IEEE 1386.1 standard over 15 years ago, PMC uses the mechanical dimensions of the CMC (Common Mezzanine Card) from IEEE 1386 with the addition of up to four 64-pin connectors to implement a 32- or 64-bit PCI bus as well as user I/O. As shown in Figure 1, two connectors, P11 and P12, handle a 32-bit PCI bus, which is expandable to 64 bits with the addition of the P13 connector. Operating at PCI bus clock speeds of 33 or 66 MHz, the 32-bit interface delivers a peak transfer rate of 132 or 264 MB/sec respectively, and twice that for a 64-bit interface. A later extension called PCI-X boosts the clock rate to 100 or 133 MHz for a peak transfer rate of 800 or about 1000 MB/sec for 64-bit implementations. The optional P14 connector supports 64 bits of user-defined I/O.

As interconnect technology for mass-market PCs began shifting away from parallel PCI buses towards the faster PCIe (PCI Express) gigabit serial links, the need for a similar migration for mezzanine modules became apparent.

XMC Module Standards

XMC modules are defined under VITA 42 as the switched fabric extension of the PMC module. They require either one or two multipin connectors, called the primary (P15) and secondary (P16) XMC connectors, shown in Figure 2. Each connector can handle eight bidirectional serial lanes, using a differential pair of pins for each direction. The VITA 42.3 sub-specification defines pin assignments for PCIe, while VITA 42.2 covers SRIO (Serial RapidIO). Typically, each XMC connector is used as a single x8 logical link or as two x4 links, although other configurations are also defined. Data transfer rates for XMC modules depend on the gigabit serial protocol and the number of lanes per logical link.

FMC Module Standards

Defined in the VITA 57 specification, FMC modules are intended as I/O modules for FPGAs. They depart from the CMC form factor, with less than half the real estate, as shown to scale in Figure 3. Two different connectors are supported: a low-density connector with 160 contacts and a high-density connector with 400 contacts. Connector pins are generically defined for power, data, control and status, with the specific implementation depending on the design.

FMC modules rely upon the carrier board FPGA to provide the necessary interfaces to the FMC components. These can be single-ended or differential parallel data buses, gigabit serial links, clocks, and control signals for initialization, timing, triggering, gating and synchronization. For data, the high-density FMC connector provides 80 differential pairs or 160 single-ended lines. It also features ten high-speed gigabit serial lanes, with differential pairs for each direction.

Transfer Rate Comparison

The data transfer rates of PMC and XMC modules are well defined by the interface standard they implement. Nevertheless, these rates are often affected by the carrier board in several ways. A shared PCI bus supporting other traffic will effectively block all transfers to a PMC until it is granted use of the bus. For example, this problem occurs on dual PMC SBCs (single board computers), where the two PMCs often share the same local PCI bus. Also, when PMCs are installed on simple 3U CompactPCI carriers, the common PCI backplane must be shared across all boards installed in the card cage. Lastly, a carrier card or adapter that presents a lower speed PCI bus to the PMC module will force the module to operate its interface at that lower speed.
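The PCI and PCI-X peak rates quoted above follow from simple arithmetic: one transfer of the full bus width per clock. The sketch below is only illustrative; the function names are hypothetical, and it assumes the nominal clock values (33, 66, 100, 133 MHz) and that a slower carrier simply caps the clock the module can use.

```python
def pci_peak_mb_per_sec(bus_width_bits: int, clock_mhz: float) -> float:
    """Peak parallel-bus rate: one transfer of bus_width_bits per clock cycle."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * clock_mhz


def carrier_limited_peak(bus_width_bits: int,
                         module_max_clock_mhz: float,
                         carrier_clock_mhz: float) -> float:
    """Assumption: the module runs at the slower of its own and the carrier's clock."""
    clock = min(module_max_clock_mhz, carrier_clock_mhz)
    return pci_peak_mb_per_sec(bus_width_bits, clock)


if __name__ == "__main__":
    for width, clock in [(32, 33), (32, 66), (64, 66), (64, 100), (64, 133)]:
        print(f"{width}-bit @ {clock} MHz: {pci_peak_mb_per_sec(width, clock):.0f} MB/sec")
    # A 64-bit, 133 MHz capable PMC on a carrier that only offers a 66 MHz bus
    print(carrier_limited_peak(64, 133, 66), "MB/sec on the slower carrier")
```

With the nominal 133 MHz clock the 64-bit result is 1064 MB/sec, which the text rounds to 1000 MB/sec. None of these numbers account for bus arbitration or sharing, which only reduce the rate a given PMC actually sees.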


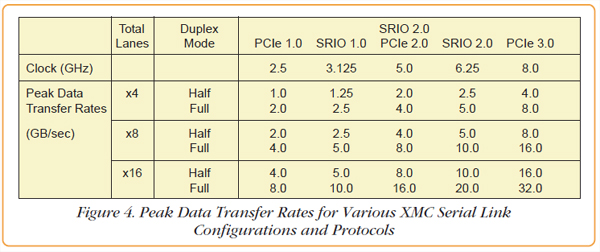

XMCs have an inherent data rate advantage over PMCs because they use fast gigabit serial links. Even the slowest x4 PCIe 1.0 interface still matches the fastest PCI-X 64-bit bus at 133 MHz. Beyond raw speed, a major system-level benefit of gigabit serial interfaces is that they are dedicated point-to-point links and are not subject to the sharing penalty of parallel buses. Figure 4 shows the peak data transfer rates for PCIe and SRIO for different width gigabit serial links.
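As a rough check on the PCIe side of this comparison, the peak rate of a link can be estimated from the per-lane signaling rate, the line-encoding overhead, and the lane count. The sketch below uses the published PCIe signaling rates (2.5, 5 and 8 GT/sec) and encodings (8b/10b for Gen 1 and 2, 128b/130b for Gen 3); the table and function names are illustrative, and protocol overhead above the line encoding is ignored.

```python
# generation -> (signaling rate in GT/sec per lane, line-encoding efficiency)
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
}


def pcie_peak_gb_per_sec(generation: int, lanes: int) -> float:
    """Peak payload rate in GB/sec for one direction of a PCIe link."""
    gt_per_sec, efficiency = PCIE_GENERATIONS[generation]
    gbits_per_lane = gt_per_sec * efficiency  # usable Gbit/sec per lane
    return gbits_per_lane / 8 * lanes         # 8 bits per byte


if __name__ == "__main__":
    for gen in (1, 2, 3):
        for lanes in (4, 8):
            rate = pcie_peak_gb_per_sec(gen, lanes)
            print(f"PCIe Gen {gen} x{lanes}: {rate:.2f} GB/sec")
```

Under these assumptions a Gen 1 x4 link works out to 1 GB/sec, in line with the 64-bit PCI-X figure, while a Gen 3 x8 link comes to just under 8 GB/sec.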

Ultimately, any system will have CPU and memory bandwidth limitations, but new multicore processors and chip sets feature more than 40 PCIe Gen3 lanes, each handling 1 GB/sec, and four DDR3 memory banks, each delivering transfer rates of 12.8 GB/sec. In these systems, a dedicated x8 PCIe link between the XMC and the system supports a respectable transfer rate of 8 GB/sec.

Unlike PMCs and XMCs, FMCs do not use industry standard interfaces like PCI or PCIe. Instead, each FMC has a unique set of control lines and data paths, each one differing in signal levels, quantity, bit widths and speed. At a 1 GHz data clock rate, the 80 differential data lines can deliver 10 GB/sec. At a 5 GHz serial clock rate, the ten gigabit serial lanes can deliver 5 GB/sec. In fact, specification design goals for FMCs are actually twice these rates.
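The two FMC figures can be reproduced with a short calculation. This is a sketch under stated assumptions, not part of the VITA 57 specification: it assumes each differential pair carries one bit per data clock, and that the serial lanes use 8b/10b encoding (80% efficiency), which is what makes ten 5 Gbit/sec lanes come out at 5 GB/sec. The function names are hypothetical.

```python
def parallel_gb_per_sec(pairs: int, data_clock_ghz: float) -> float:
    """Assumes one bit per differential pair per data clock."""
    return pairs * data_clock_ghz / 8  # bits to bytes


def serial_gb_per_sec(lanes: int, line_rate_gbit: float,
                      encoding_efficiency: float = 0.8) -> float:
    """Assumes 8b/10b line encoding unless another efficiency is given."""
    return lanes * line_rate_gbit * encoding_efficiency / 8


if __name__ == "__main__":
    print(parallel_gb_per_sec(80, 1.0), "GB/sec over 80 differential pairs")
    print(serial_gb_per_sec(10, 5.0), "GB/sec over ten serial lanes")
```

Doubling either clock rate doubles the corresponding result, which is the sense in which the design goals are twice these rates.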

FMC modules are less than half the size of PMCs and XMCs, and less real estate means less freedom to strategically place components for shielding, isolation and heat dissipation. For example, A/D converters are extremely sensitive to spurious signal pickup from power supplies, voltage planes, and adjacent copper traces. Often, the required power supply lines must be re-regulated and filtered locally on the same board as the A/D converters for best results. Arranging this circuitry on a small FMC module can be challenging. Even though XMC modules have more components, they can often be rearranged more easily because of the larger board size.

FMCs require the FPGA to reside on the carrier board, while FPGA-based XMC modules include the FPGA on the mezzanine board. Schematically, the overall circuitry between the front end and the system bus may be nearly identical, but the physical partitioning occurs at two different points.

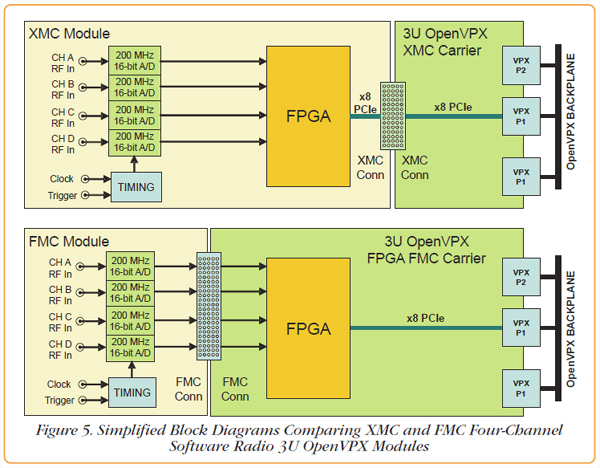

To illustrate this, Figure 5 shows two different implementations of a four-channel A/D converter software radio module for 3U OpenVPX. Notice that both block diagrams feature the same A/D converters and FPGAs, and provide the same x8 PCIe interface to the OpenVPX backplane. The XMC implementation on top uses the XMC connector between the FPGA and the backplane, while the FMC implementation below uses the FMC connector between the A/Ds and the FPGA.

Because most of the power is consumed by the FPGA, comparing power dissipation between FMC and XMC modules will strongly favor the FMC. However, since the same resources are used in both block diagrams, the overall 3U module power dissipation is nearly identical.

In a comparison between PMC and XMC or FMC modules, there is one additional factor to consider: Gigabit serial interfaces implemented in FPGAs typically consume more power than parallel bus interfaces. So when considering PMC products versus XMC/FMC products for a four-channel A/D converter module, the PCI bus of the PMC module will draw less power than a PCIe link. Of course, the extra power required for PCIe delivers tremendous benefits in both speed and connectivity.

Each FMC presents a unique electrical interface that must be connected to an FPGA configured precisely to handle that specific device. This may be a reasonable solution if the FMC module and the FMC carrier are both supplied by the same vendor, and the FPGA on the carrier is pre-configured by the vendor for the specific FMC module installed.

For 6U carriers with two or three FMC sites, the FPGAs must be configured to match the specific combination of each of the FMC modules installed at each site. This FMC-to-FPGA dependency creates a potentially large number of combinations resulting in configuration management and customer support issues.
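To get a feel for how quickly these combinations grow, the sketch below enumerates every assignment of module types to carrier sites. The module names are hypothetical placeholders, and the model assumes every site is populated and that the same module type may appear at more than one site.

```python
from itertools import product


def fpga_configurations(module_types, sites):
    """Every ordered assignment of a module type to each FMC site."""
    return list(product(module_types, repeat=sites))


if __name__ == "__main__":
    # Hypothetical catalog of FMC module types
    modules = ["adc_module", "dac_module", "digital_io_module"]
    for sites in (1, 2, 3):
        count = len(fpga_configurations(modules, sites))
        print(f"{len(modules)} module types, {sites} site(s): {count} configurations")
```

The count is the number of module types raised to the number of sites, so even a small catalog on a three-site carrier yields dozens of distinct FPGA configurations to build, test and support.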

When a customer purchases an FMC module from one vendor and an FMC carrier from a different vendor, additional challenges arise. Someone must develop custom FPGA configuration code for the carrier to support the FMC module. Perhaps the FMC vendor will agree to develop code for a third-party carrier. Perhaps the carrier vendor will develop code for a third party FMC module.

Failing either of these strategies, the user must configure the FPGA. In this case, both the FMC module and the FMC carrier are third-party products with two different technical support resources. If something doesn't work, it can be difficult to resolve problems efficiently. And if either the FMC module vendor or the FMC carrier vendor revises its product, the change may affect the interoperability of the two boards.

Perhaps the most challenging aspect of FMCs is the development of software drivers and board support libraries covering the myriad combinations of modules and carriers. Unless these are supplied from a single vendor who also supplies the FMC module and carrier, the same support and development issues discussed above for FPGA development may arise.

In contrast, PMCs and XMCs use industry standard system interfaces, typically PCI and PCIe, with a strong trend towards PCIe. Nearly all recent embedded systems take advantage of the widely adopted PCIe standard for interconnecting system elements. This includes VXS, OpenVPX, and CompactPCI, as well as high-performance PC platforms using PCIe cards installed in motherboard expansion slots. These factors reduce dependency on the XMC vendor and make it easier to resolve questions of responsibility in multi-vendor systems.

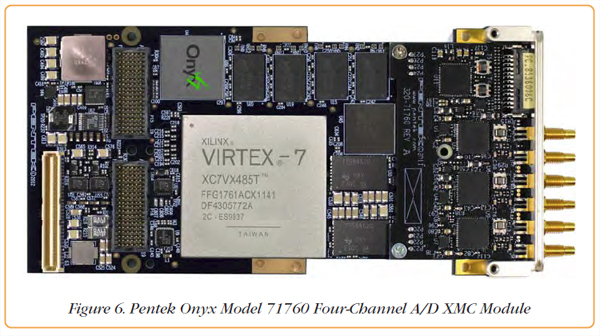

Figure 6 shows an XMC module featuring the latest Virtex-7 FPGA technology in a four-channel 200 MHz 16-bit A/D software radio module. It is equipped with dual XMC connectors, each capable of supporting eight lanes of gigabit serial links. The standard product features an x8 PCIe Gen 2.0 interface, and it is optionally available with Gen 3.0 to boost system transfer rates to 8 GB/sec.

Notice the cutouts along the sides of the product to accommodate surrounding metal structures for conduction cooling, making the product ideal for both lab and deployed military systems such as unmanned vehicles.

The product is also available in PCIe, CompactPCI, and OpenVPX form factors through carrier board adapters, and is supported with the Pentek ReadyFlow Board Support Libraries for Windows and Linux. Customers wishing to add custom IP for signal processing or special algorithms can choose the GateFlow® FPGA Design Resources containing full VHDL source code and the complete FPGA project. However, many customers take advantage of the rich collection of installed FPGA functions which address communications and radar applications, thereby saving the need for custom FPGA development.

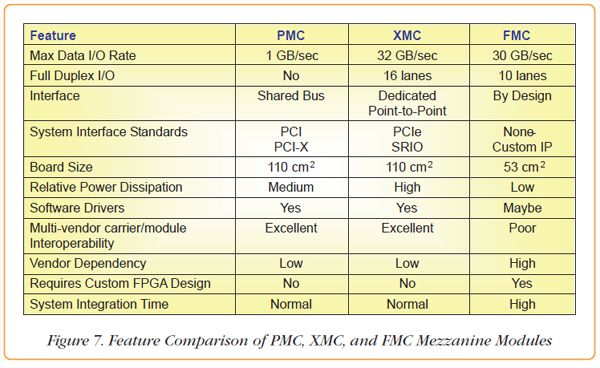

Figure 7 summarizes the points discussed in this article comparing PMC, XMC and FMC modules. Military and aerospace system integrators must weigh the pros and cons of each, remembering that all three are available in rugged versions suitable for deployment in severe environments.

If lowest power is the driving factor, PMCs may still be the right choice, especially if interface speed is not critical. With hundreds of vendors and thousands of products, PMCs offer the widest range of specialized I/O solutions.

FMC modules can be quite effective as long as the same vendor supplies both the mezzanine module and the carrier with tested and installed FPGA configuration code. Otherwise, XMC modules offer excellent solutions for embedded systems due to the proliferation of links, carriers, backplanes, and adapters all based on PCIe. This eliminates the need for a custom FPGA development effort, minimizes product support issues, and speeds development cycles.
