Pipeline Newsletter, Fall Vol. 22, No. 2

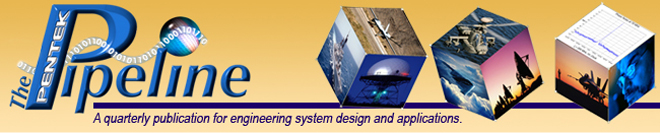

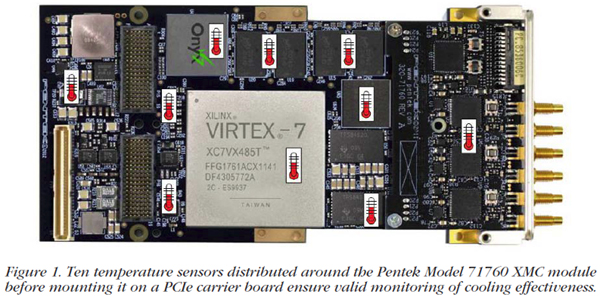

Cooling Strategies for Real-Time Embedded PC Systems

Processors in server-class workstations have become powerful enough to contend for some of the most demanding real-time embedded applications. Integrating these cost-effective platforms with the open-architecture board-level products required for government and military applications poses significant challenges for system designers. Adequate cooling of these power-hungry boards to assure reliable system operation is a critical need.

Workstation Benefits and Limitations

Server-class PC workstations offer the benefits of high-end processing, fast data links, efficient power management, deep and fast memory, low latency, and capable network interfaces, all in a larger desktop or rackmount chassis. Powerful CPUs like Intel's multicore Xeon or Core i7 processors are tightly coupled to advanced chipsets for PCIe expansion slots, multichannel DDR3 memory, and 1 GbE network connections. These key factors offer specific benefits to real-time applications, where high-rate continuous and sustained data transfers between system components must be guaranteed.

Driven by the large markets for enterprise computing, web and file servers, and cloud computing, prices for these systems have become extremely competitive compared to alternative embedded-system architectures such as VME, CompactPCI, and OpenVPX.

Unlike these traditional platforms, PC servers fail to provide the forced-air or conduction-cooling facilities necessary to remove the 40 to 80 watts of power typically consumed by boards containing the DSPs, FPGAs, A/Ds, and D/As that are essential to many real-time embedded systems. Instead, server motherboards rely on custom heat-sink assemblies, often coupled through heat pipes to finned active-cooling radiators, to remove heat from the CPU and chipsets. Hot-swap disk drives mounted in accessible front-panel bays are cooled by mid-chassis fans that pull air in from the front. Power supplies contain one or more thermostatically controlled fans to maintain safe operating temperature limits. Two or more rear-panel case fans help to evacuate hot air from inside the chassis.

But the site for expansion card slots, in the left rear quadrant of a server chassis, has no standard provision for cooling. Furthermore, the rear PC panels of the slot cards act as barriers, blocking air from the mid-chassis fans that might otherwise flow across the card surfaces. Indeed, this region of the server chassis acts as a closed box, trapping heat and causing unsafe temperatures for components on these cards.

Cooling By Design

One of the first steps in evaluating any cooling solution is accurate measurement of its effectiveness. Motherboards often include extensive temperature monitoring facilities for the CPU and other critical components as part of the BIOS and system driver software. Disk drives report temperatures through SATA and RAID controller software utilities. Likewise, embedded-board vendors should incorporate several temperature sensors at key locations around the board to ensure that no hot spot is missed. Also, many high-dissipation devices like DSPs and FPGAs include junction temperature sensors as part of the silicon die. Monitoring these chip sensors is essential to ensure sufficient cooling for maintaining junction temperatures within the manufacturer's limits.

As an example, Figure 1 shows the Pentek 71760 Quad 200 MHz 16-bit A/D card with ten temperature sensors, including junction sensors for the Virtex-7 FPGA and the PCIe switch. A software utility reports all ten sensor readings to help score different thermal management techniques during system integration and testing. After deployment, independently programmable threshold settings allow alarm notification to the system through a PCIe interrupt and a front-panel LED if any sensor exceeds a predetermined temperature. This is extremely valuable as an alert to end users of a fan failure or airflow blockage during operation.

XMC and PMC, both industry-standard mezzanine modules for embedded systems, can be adapted to PC servers through the use of PCIe carrier cards that bring the PCI or PCIe interface of the modules down to PCIe edge fingers for the motherboard card slots. In these boards, most of the heat is dissipated on the inside surface of the module, which faces the carrier card. One effective solution, shown in Figure 2, is to provide a wraparound copper or aluminum structure for the XMC module that pulls heat from the hotter inner components and dissipates it on the outer surface of the board, where more airflow is available.

Effective Fan Strategies

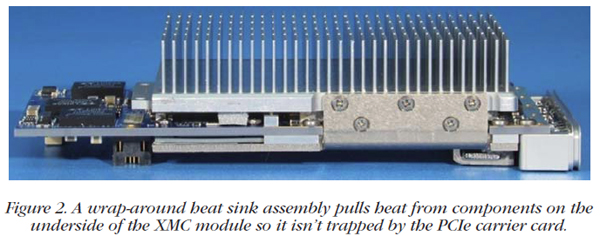
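When sizing a fan for the expansion-card area, a first-order energy balance gives a feel for how much airflow is needed to carry away the 40 to 80 watts a board may dissipate. The sketch below is not from the original article. It assumes all of the board's heat ends up in the air stream, uses typical sea-level air properties, and the function name `required_airflow_cfm` is purely illustrative. The temperature rise here is that of the bulk air passing the card, not the drop in component temperature, so it ignores heat-sink thermal resistance and uneven flow between cards.

```python
# First-order estimate: P = rho * cp * V_dot * dT, solved for volumetric flow.
# Air properties are assumed values for roughly 20 degrees C at sea level.
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3_PER_S_TO_CFM = 2118.88  # 1 cubic metre per second in cubic feet per minute


def required_airflow_cfm(power_w: float, air_temp_rise_c: float) -> float:
    """Airflow (CFM) needed to absorb power_w with the given air temperature rise."""
    if power_w < 0:
        raise ValueError("power_w must be non-negative")
    if air_temp_rise_c <= 0:
        raise ValueError("air_temp_rise_c must be positive")
    flow_m3_s = power_w / (
        AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * air_temp_rise_c
    )
    return flow_m3_s * M3_PER_S_TO_CFM


if __name__ == "__main__":
    # Compare the low and high ends of the 40 to 80 W range for a 10 degree C rise.
    for watts in (40.0, 80.0):
        cfm = required_airflow_cfm(watts, 10.0)
        print(f"{watts:.0f} W at 10 C air rise: {cfm:.1f} CFM")
```

For an 80 W board and a 10 °C air temperature rise this works out to roughly 14 CFM actually passing over the card. A fan's rated free-air flow is usually well above what reaches the card surfaces in a crowded chassis, so a figure like this is best treated as a lower bound.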

Placing one or more case fans directly above the expansion cards, blowing downward between the cards, is one of the most effective cooling techniques. Since this type of fan is not provided in most server chassis, a special bracket or mounting plate must be fabricated that attaches to the chassis. This requires a chassis with a height of at least 4U to provide vertical clearance for the fan itself and a large enough gap under the top cover to allow adequate air intake.

Figure 4 shows a single 120 mm case fan mounted on a custom plate in a 4U rackmount chassis. When properly implemented, this type of fan can provide up to 15 °C of additional cooling.

Card Relocation

Another strategy for optimal cooling is simply moving the expansion cards to slot positions that offer the best airflow or clearance. Often, the best slot is the end slot towards the center of the chassis. Although a server motherboard typically has a maximum of seven card slots, the choice of slots is often limited by other considerations. Each slot can differ in its interface (PCI or PCIe) and in the number of PCIe lanes. The number of PCIe lanes defines the maximum potential data transfer bandwidth of a card slot, so cards requiring the highest transfer rates are only supported by certain slots. In addition, the PCIe chipset often imposes maximum lane restrictions on combinations of slots used. For example, an x16 slot could be reduced to x8 if another card is plugged into the adjacent slot. Therefore, the system designer must often trade off card slot position against data transfer rate to achieve the best cooling configuration.

Summary

Successful thermal management requires multiple strategies, both at the board design level and during system integration. Embedded cards should be equipped with thermal sensors and software monitoring utilities. Taking advantage of these sensors, in addition to sensors for motherboard components and disk drives, can help during system integration and testing, and benefit the end user. Conducting heat from hot components like FPGAs through metal structures to fins that are exposed to airflow is essential for maintaining silicon-junction temperature margins. Fans incorporated within the card heat sinks, or mounted separately, provide targeted airflow in the poorly ventilated card slot area of most server chassis. Finally, card slot relocation can make a difference. Experience is invaluable in the often elusive and frustrating task of cooling embedded PC systems. Applying these common-sense strategies will help system designers find the best solution.
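The per-sensor threshold alarms described in the article can be pictured with a small host-side check. This is only a sketch under stated assumptions: it does not use Pentek's software utility or any real driver API, and the sensor names, the threshold values, and the `SensorLimit` and `find_alarms` names are all hypothetical. Readings are assumed to arrive as a plain dictionary of degrees Celsius from whatever monitoring tool is available.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class SensorLimit:
    """Independently programmable alarm threshold for one sensor (hypothetical)."""
    name: str
    threshold_c: float


def find_alarms(
    readings_c: Dict[str, float], limits: List[SensorLimit]
) -> List[Tuple[str, float, float]]:
    """Return (name, reading, threshold) for each sensor above its threshold.

    Every limit must have a matching entry in readings_c; a missing sensor
    raises KeyError rather than being silently skipped.
    """
    alarms = []
    for limit in limits:
        reading = readings_c[limit.name]
        if reading > limit.threshold_c:
            alarms.append((limit.name, reading, limit.threshold_c))
    return alarms


if __name__ == "__main__":
    # Illustrative values only, not taken from any datasheet.
    limits = [
        SensorLimit("fpga_junction", 85.0),
        SensorLimit("pcie_switch_junction", 95.0),
        SensorLimit("board_inlet", 50.0),
    ]
    readings = {
        "fpga_junction": 91.0,
        "pcie_switch_junction": 70.0,
        "board_inlet": 41.5,
    }
    for name, reading, threshold in find_alarms(readings, limits):
        print(f"ALARM: {name} at {reading:.1f} C exceeds {threshold:.1f} C")
```

Keeping a separate threshold per sensor mirrors the independently programmable settings the article describes: a junction sensor inside an FPGA can tolerate a much higher reading than an inlet-air sensor, so a single global limit would either miss hot spots or raise false alarms.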
